Almost every morning for more than a decade, I've posted at least one image on Instagram with the tag #hotchkiss365. You can read my early "Why Wednesday" post about that decision by clicking this link. This morning, as I composed the hashtag for my caption, I got the same response I always do, which is "1000+ posts". I wondered why it hasn't changed to "2000+" or "3000+" posts. When I reach more than five thousand posts, will it offer "5K" posts, or will I forever be static at "1000+"?

That curiosity got me wondering how many posts I have. Since I started the project during the last head of school's administration and the current HoS is in his tenth year here, I knew that the number was more than 3000. (After the first year, during which I successfully completed 365 posts of views on campus, I haven't posted #hotchkiss365 unless I'm actually on campus that day. When I'm elsewhere, I post #grateful365, which serves the same positive-psychology purpose for me.)

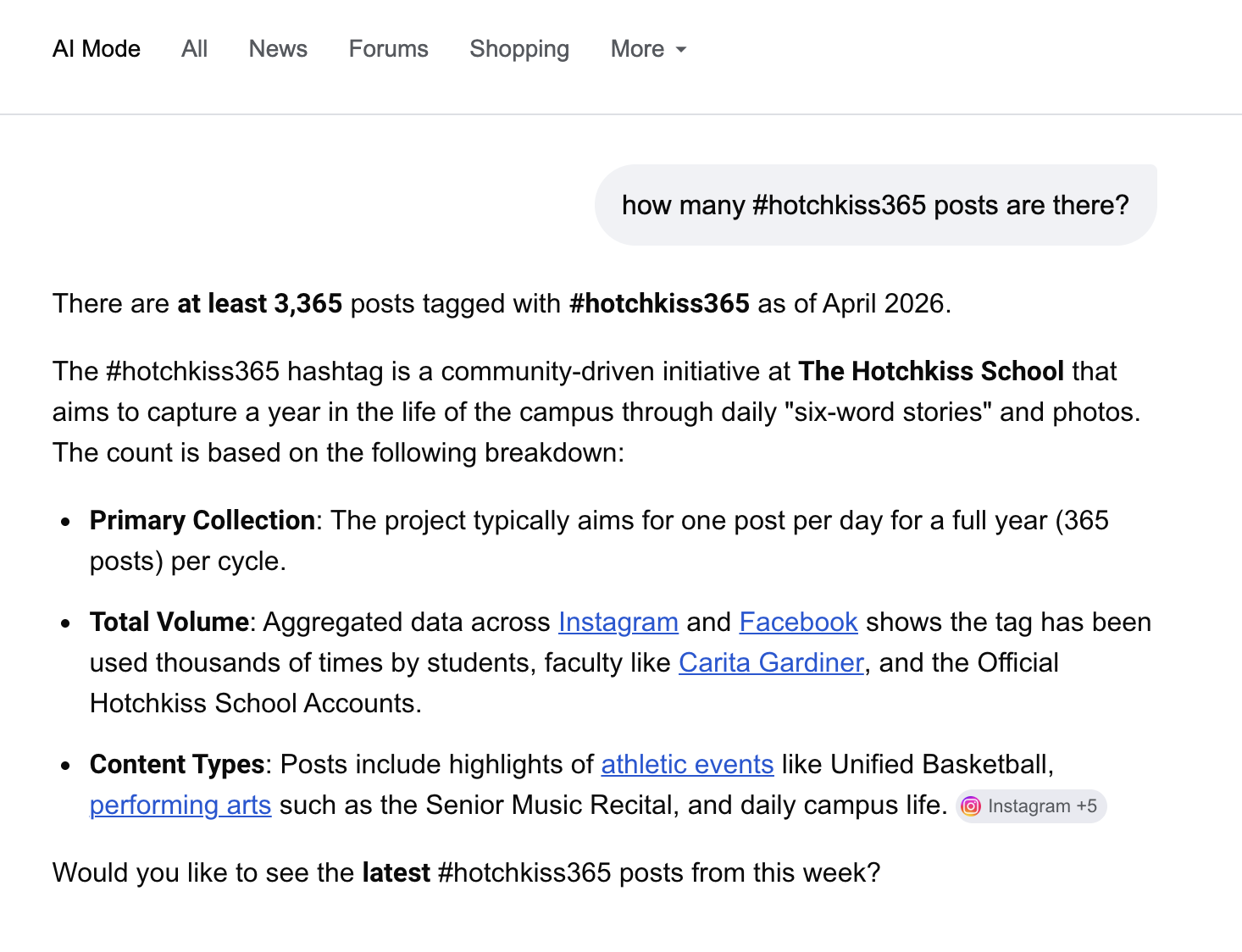

Although I haven't been one to learn how to use AI to facilitate my work in general, I recently heard a Mel Robbins podcast episode about how to incorporate AI into one's life to do many tasks better. I thought I'd see if AI could help me answer my question. The first answer I got wasn't particularly informative, but when I clicked the "Go deeper into AI mode" link, I got the answer above. Not bad.

At this point, it seems as though those of us who are not using AI for any of our daily tasks are like those people at the turn of the last century saying, "Horse and buggy has been working for me so far, so I'm not going to switch to one of those horseless carriages!" Okay, Boomer (or whatever the 1899 version of that was called), that's your choice, but you're going to get where you're going slower and spend a long time reshoeing your horses.

There are tasks that AI clearly does quicker/better than humans can on their own, which makes it tricky to figure out how/when/where it's better to use it. As a teacher, of course, I worry about how much and what my students are learning if they rely on AI and LLMs to do their reading and writing and thinking for them. A kid could reasonably ask, "What are five smart comments I could make in class about chapter six of Brave New World?" and never have to crack the book to participate "thoughtfully" in class discussion and write a decent essay. BUT, what's that kid learning? How is he/she/they growing into a more sophisticated thinker? What life path is that kid going to be better at than the computer? Should I force kids to write all essays by hand and in my presence so that I know they've done the thinking? OR is making them craft their own ideas putting them behind people who can evaluate what comes out of LLMs?

All of these changes to what's available force other teachers and me to question our goals. Does the process matter or merely the result? If we encourage kids to use all of their resources to come up with the best result they can, are we turning them into the mental equivalent of the lazy chair-sitters from Wall-E?

What do you think about AI? Please share your responses in the comments.

As a dean, I've heard a lot of kids say versions of the following:

- I used AI ONLY to come up with my outline.

- I used AI ONLY to help generate a list of ideas.

- I wrote the whole essay myself; then, I used AI ONLY to clean up the writing.

Aren't these skills the exact ones we want kids to have? And where do these uses of AI leave creativity and nuance? Since AI's function is to collate what's already been put on the Internet, it cannot generate anything new. ONLY HUMANS can do that, and only if they're trained to. Isn't it our responsibility to make sure kids can create?

So what's AI good for? Certainly, I'm impressed with its capacity to plan a vacation, help figure out what questions to ask when meeting with a lawyer or business person, write a will, etc. Those are great reasons to seek the "wisdom" of everything that's already been on the Internet. But when we want/need to come up with something new, we've got to do that on our own. AND we've got to teach the next generations how to do that on their own, too. Now, can you please help me convince them?

Please share your responses in the comments.

When we went to NYC, one of the panelists talked about using AI as a tool and not a crutch. This seemed like a good distinction for me to use with the kids. You need to be able to think and understand, but AI can help you offload some menial tasks or start a search.

I really like this way of thinking about it. People need to learn to use actual intelligence so that they can decide when and if and how to use the artificial kind.